Want to appear in ChatGPT, Google AI Overviews, Claude, and Perplexity?

We show you how your business appears today, what to fix first, and how to track whether it improves.

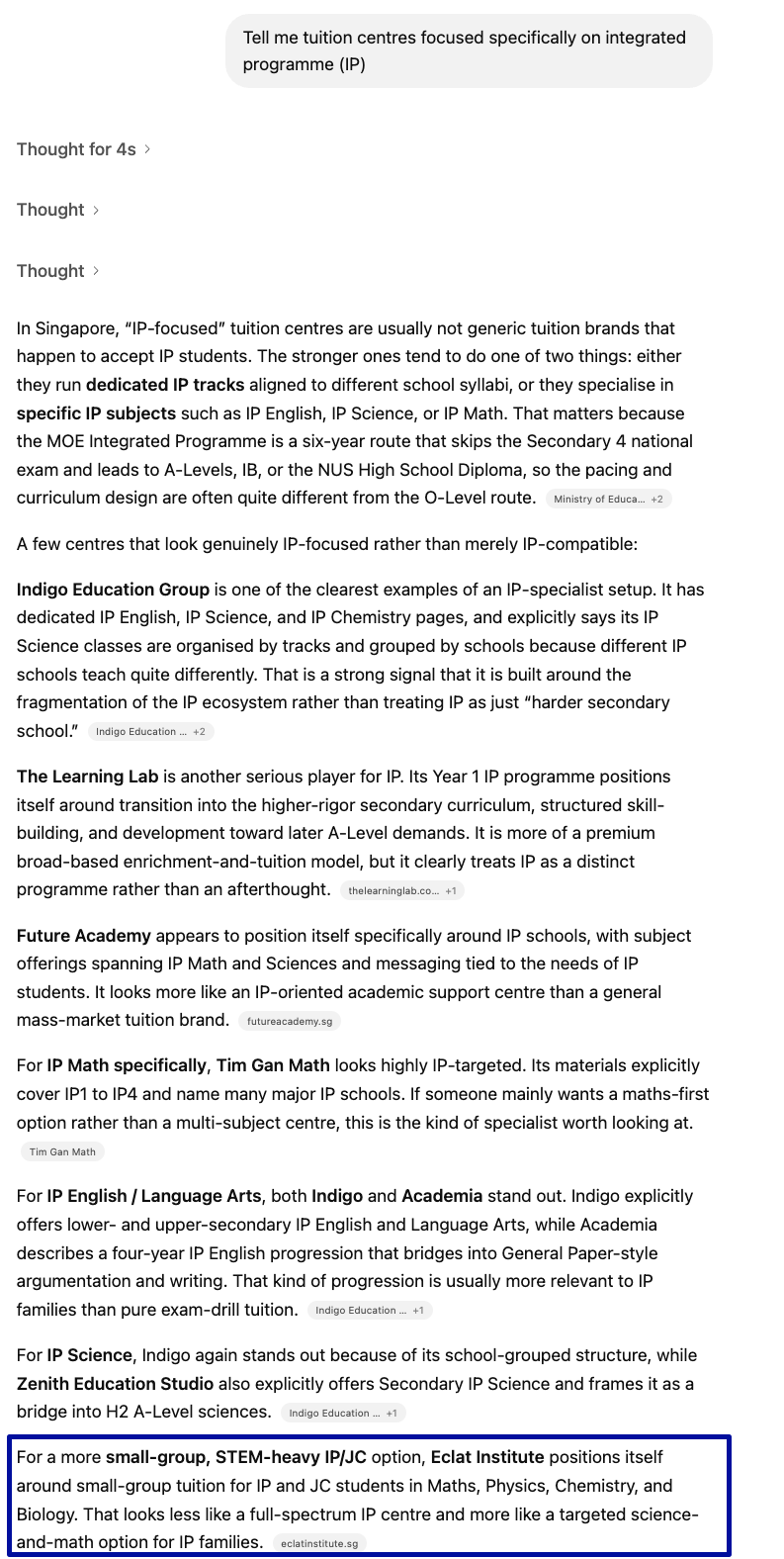

Proof from a real client

These screenshots come from Eclat Institute, one of our clients. We manage their website, analytics, social media, SEO, and AEO. So this is real client work, not a made-up example.

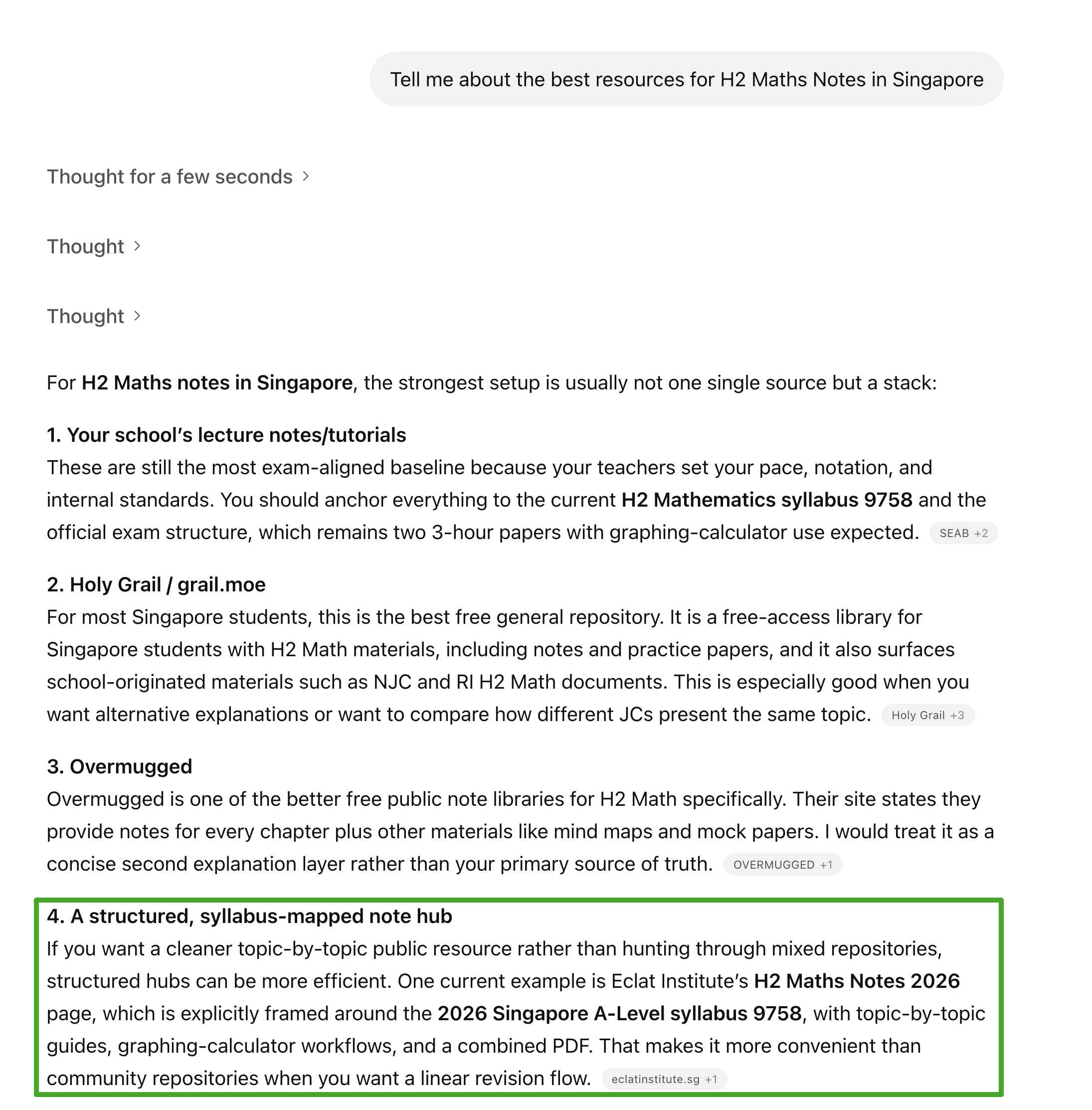

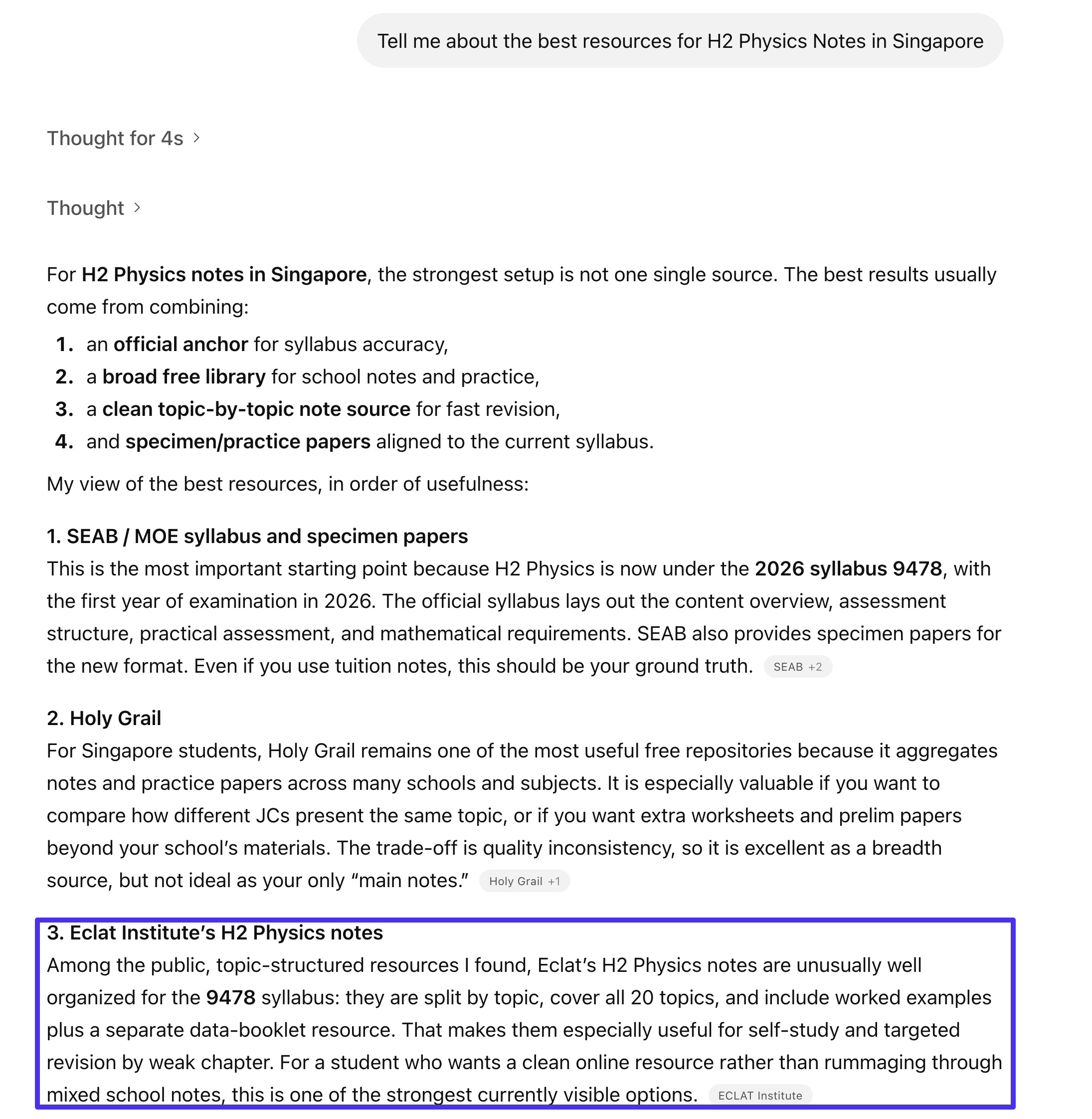

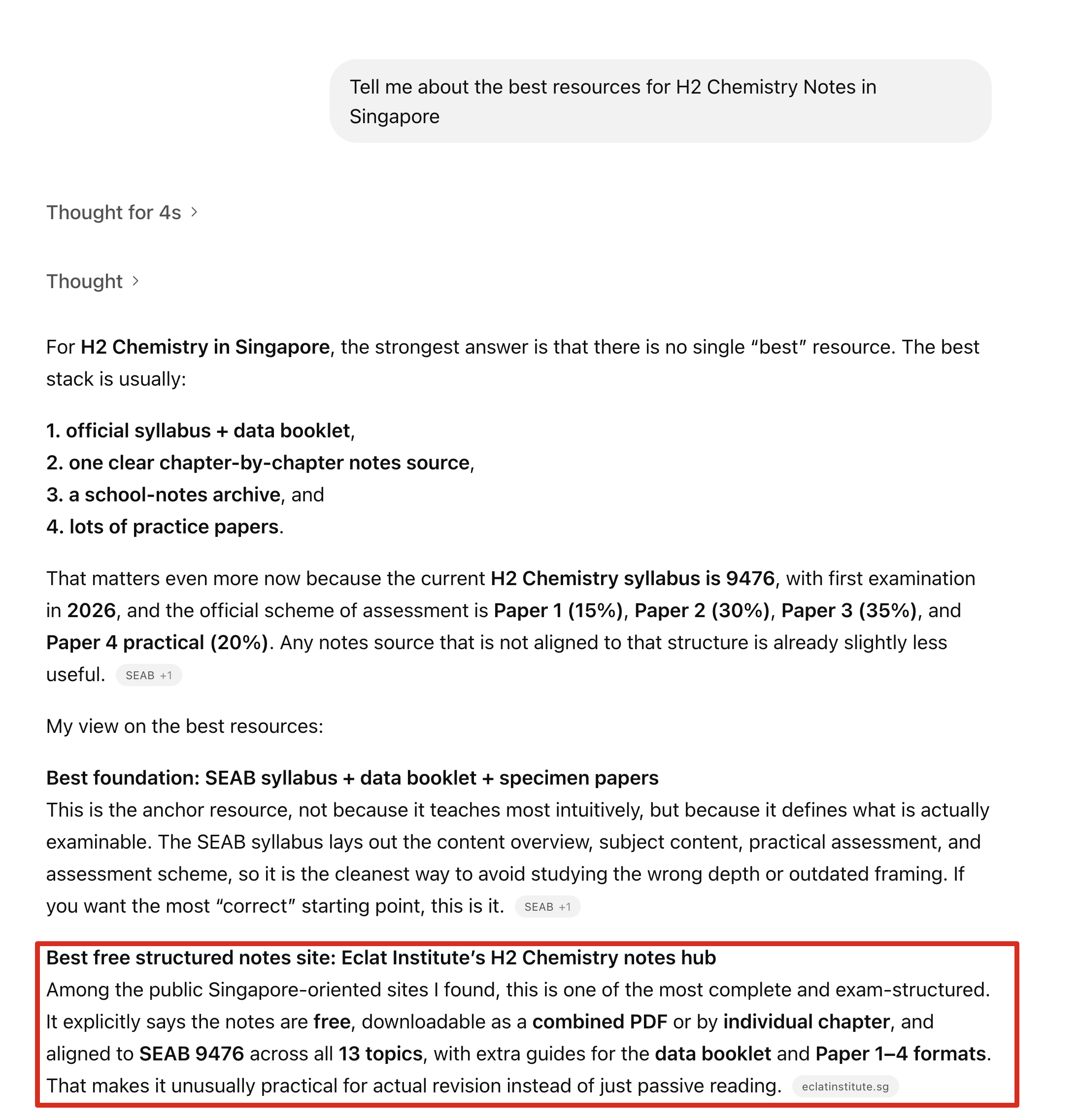

The result above is the headline proof. Below are five H2 subject note searches where Eclat is also cited. This happened because the site is organised around the official 2026 Singapore A-Level syllabus. Each H2 subject has a main notes hub with one combined bundle, plus separate pages for each syllabus topic and exam guide. That gives AI platforms both clear overview pages and specific topic pages to cite. That same idea sits at the heart of the work we do for clients.

See the live structure for yourself: H2 Physics notes, H2 Chemistry notes, H2 Biology notes, and H2 Maths notes.

Query: “H2 Maths notes”

“Eclat Institute's H2 Maths Notes 2026 page, framed around 2026 SEAB syllabus 9758, topic-by-topic guides, graphing-calculator workflows, combined PDF.”

ChatGPT, 2026-04-08, cited #4 in the structured-hub-category slot

Query: “H2 Physics notes”

“Eclat's H2 Physics notes are unusually well organized for the 9478 syllabus: split by topic, cover all 20 topics, worked examples plus separate data-booklet resource.”

ChatGPT, 2026-04-08, cited #3 as a named recommendation

Query: “H2 Chemistry notes”

“Best free structured notes site: Eclat Institute's H2 Chemistry notes hub, free, downloadable as combined PDF or by individual chapter, aligned to SEAB 9476 across all 13 topics.”

ChatGPT, 2026-04-08, cited as the top recommended free site

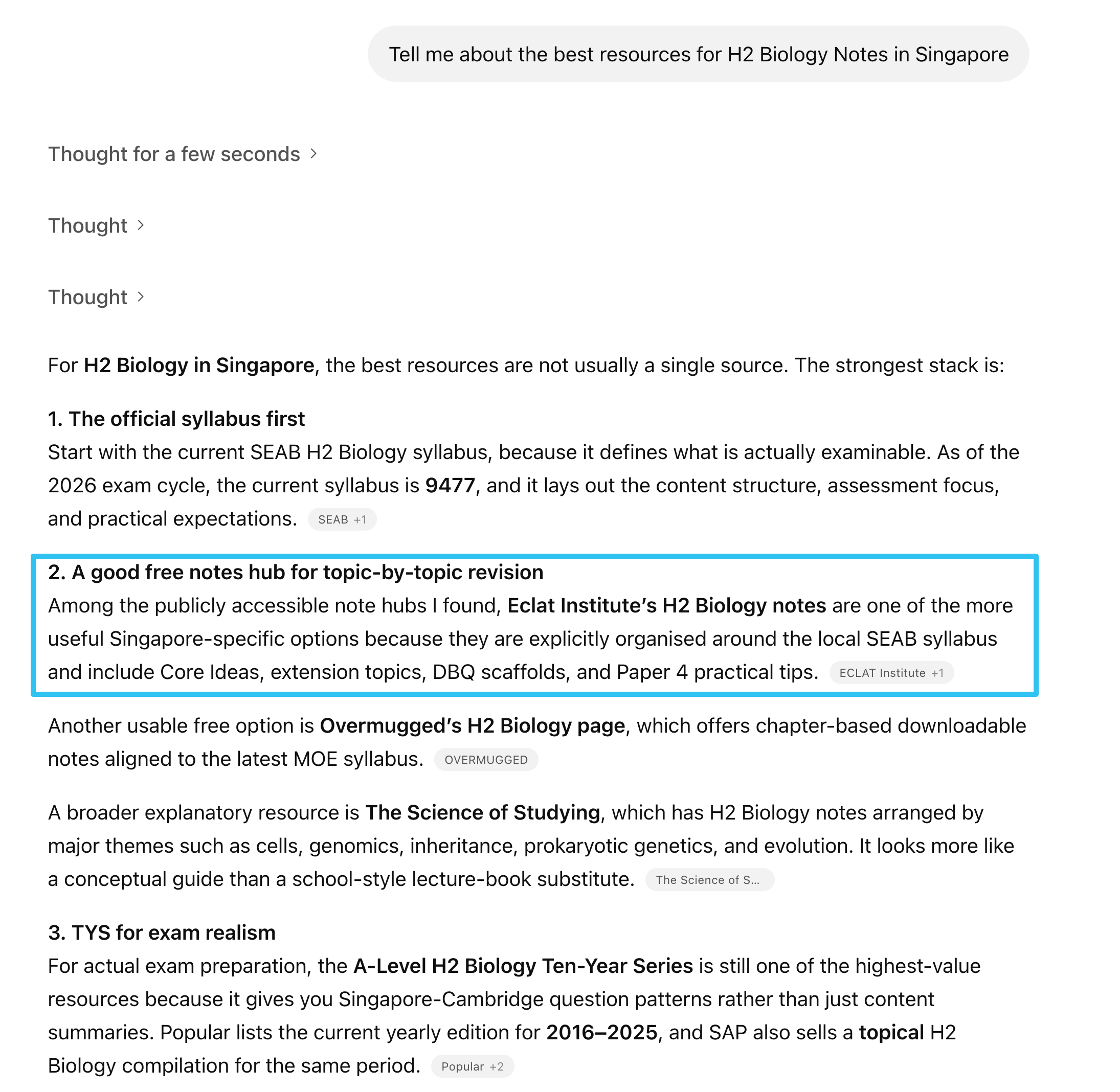

Query: “H2 Biology notes”

“Eclat Institute's H2 Biology notes are explicitly organised around the local SEAB syllabus and include Core Ideas, extension topics, DBQ scaffolds, and Paper 4 practical tips.”

ChatGPT, 2026-04-08, cited #2 as a named recommendation

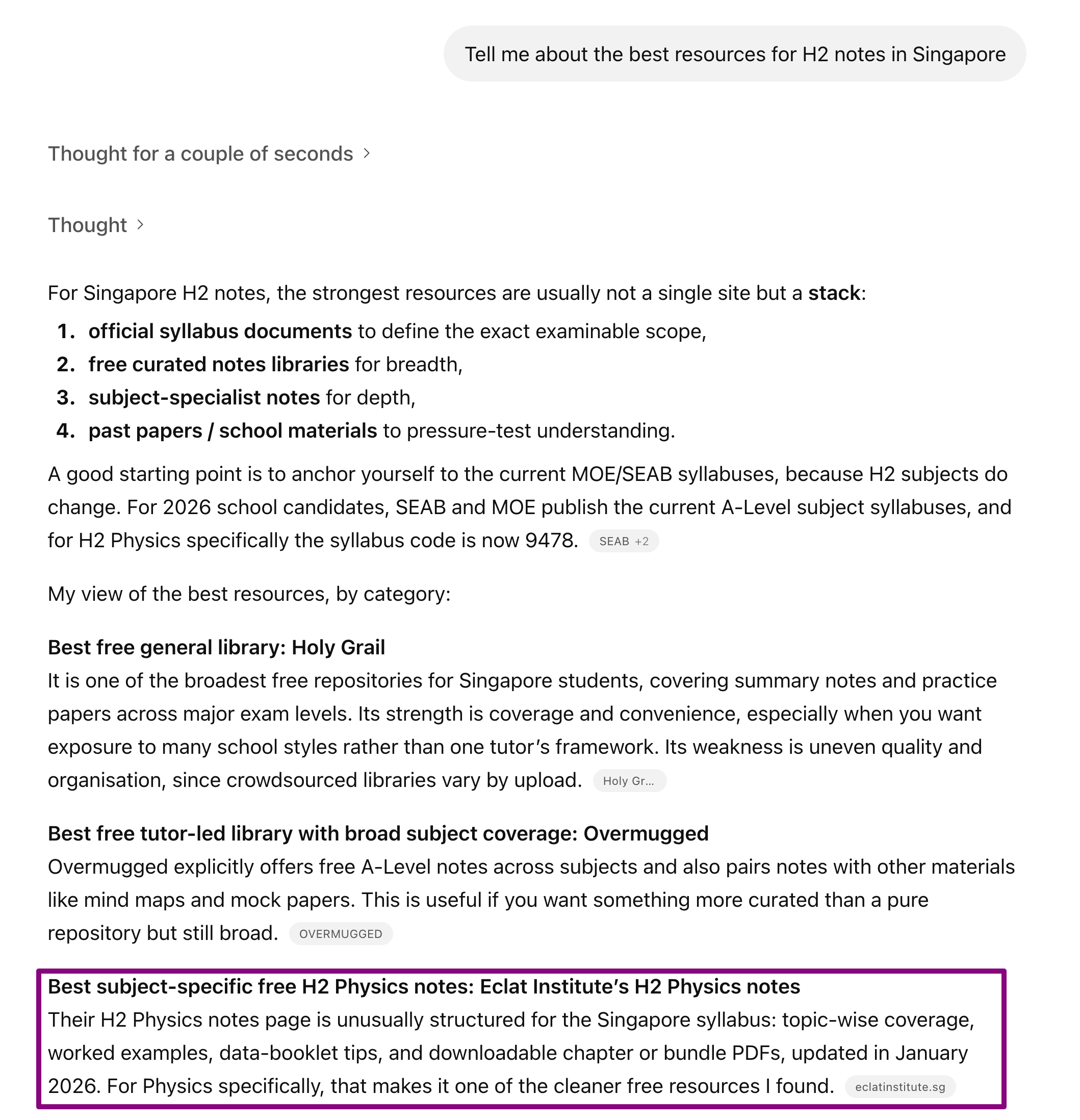

Query: “H2 notes” (broad query)

“Eclat Institute's H2 Physics notes page is unusually structured for the Singapore syllabus, topic-wise coverage, worked examples, data-booklet tips, downloadable chapter or bundle PDFs, updated in January 2026.”

ChatGPT, 2026-04-08, cited in the 'Best subject-specific' category slot

So far, we have confirmed six ChatGPT searches where Eclat Institute appears and is described correctly.

Why this matters

More people now get answers from ChatGPT and Google without ever clicking through to a website.

That means your business can miss out even if your SEO is decent. The question is no longer just whether your page can rank. It is also whether AI answers will use your business as a source and describe it correctly.

AEO is simply the work of helping your business show up clearly and correctly inside those answers.

What you get

We do three things: check how your business appears today, show you what to fix first, and give you a simple way to keep track of progress.

- 01

See how you appear today

We check how your business shows up in ChatGPT, Google AI Overviews, Claude, Perplexity, and Bing. We look for where you appear, where you do not, and whether the answers describe your business correctly.

- 02

Know what to fix first

You get a clear list of the biggest issues, what they mean, and what to change first. No vague advice. No filler. Just the fixes most likely to make a real difference.

- 03

Track progress after the audit

Most clients start with a one-off audit. If you need ongoing help after that, we can keep tracking the same searches and help with the agreed fixes. We only do this after the audit, once the starting point is clear.

What we will not promise

This field is still new, and a lot of sales claims around it are nonsense. Here is what we will not tell you, even if it would make the pitch easier.

No guaranteed rankings

No one can guarantee a place inside ChatGPT or Google AI Overviews. Anyone who promises that is selling certainty they do not control.

No one-size-fits-all format

There is no single page format that wins everywhere. FAQ blocks, schema, tables, and long guides can help in some cases and do very little in others. We tell you what fits your business, not what to copy blindly.

No vanity numbers

More mentions do not always mean better results. What matters is whether your business is described clearly, shown in the right searches, and trusted at the moment someone is ready to act.

Why this matters now

- 1

Google now shows AI answers for more searches

That means people can get what they need without ever clicking through to a website. If your business is not part of those answers, you lose visibility even if your SEO is decent.

- 2

Bing now reports AI visibility separately

For the first time, website owners can see how they perform in Bing's AI answers, not just in normal search. That is a sign this channel is becoming real, not just hype.

- 3

People click less when the AI answer is already on the page

If your traffic plan still assumes people will click the top blue link, you are working from an older version of search behaviour.

Ready when you are

If you already invest in SEO and want to know how your business appears in AI answers, start with a one-off audit. Message us on WhatsApp and we will scope it with you.